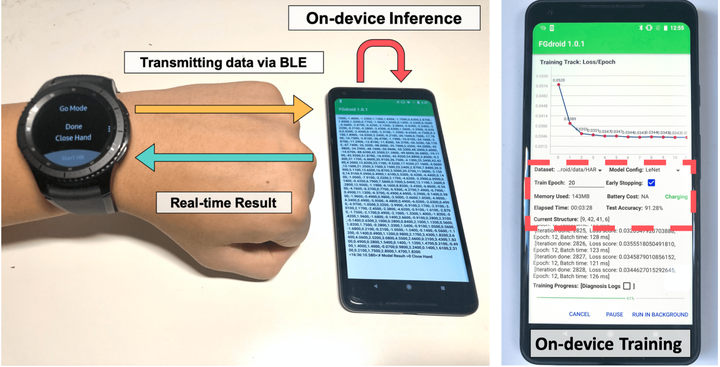

This project presents MDLdroidLite, our latest on-device deep learning framework for privacy-preserving personal mobile sensing applications. MDLdroidLite runs deep learning entirely on a single commodity smartphone, for both training and inference. It achieves this with a novel dynamic Fast-Grow control that transforms traditional DNNs into resource-efficient model structures that run on a mobile device at negligible cost. Evaluations show that MDLdroidLite outperforms existing parameter adaptation methods, speeding up training convergence by 2.84× to 4.88×. The backbone models in MDLdroidLite reduce parameters by 28× to 50× and FLOPs by 4× to 10× relative to a full-sized model while keeping the same level of accuracy.

This framework is the world's first on-device deep learning framework that supports both training and inference and is fully functional on commercial smartphones. The platform is an important step toward the promising mobile deep learning paradigm. We will continue to improve the current version and plan to release it as open source in the near future.

This work has been published in SenSys 2020.